Tidbits

Today’s edition is focused on how we see others, and how employee listening tools can help us see others more accurately in the workplace.

Brilliant in the Basics

Are you seeing others rightly? Yes, I used the word “rightly” because how you see others is dependent on your choices, not just theirs.

Let me share a well-loved story that illustrates my point. It comes from Stephen R. Covey and is captured in his timeless book, The 7 Habits of Highly Effective People.

I was riding a subway on Sunday morning in New York. People were sitting quietly, reading papers, or resting with eyes closed. It was a peaceful scene. Then a man and his children entered the subway car. The man sat next to me and closed his eyes, apparently oblivious to his children, who were yelling, throwing things, even grabbing people’s papers.

I couldn’t believe he could be so insensitive. Eventually, with what I felt was unusual patience, I turned and said, “Sir, your children are disturbing people. I wonder if you couldn’t control them a little more?”

The man lifted his gaze as if he saw the situation for the first time. “Oh, you’re right,” he said softly, “I guess I should do something about it. We just came from the hospital where their mother died about an hour ago. I don’t know what to think, and I guess they don’t know how to handle it either.”

Suddenly, I saw things differently. And because I saw differently, I felt differently. I behaved differently. My irritation vanished. I didn’t have to worry about controlling my attitude or my behavior. My heart filled with compassion. “Your wife just died? Oh, I’m so sorry. Can you tell me about it? What can I do to help?”

Everything changed in an instant.

How many times have you made a snap judgment only to be wrong?

Source: The 7 Habits of Highly Effective People; https://pi.training/coveys-subway-story-and-the-power-of-perception/#_edn1

What You Might Have Missed from DecisionWise

- Keep up with the DecisionWise fun! Here are some pictures from our events so far this year.

- Struggle with feedback? These 4 killer tips will help you give masterful feedback.

- Swipe: The Science Behind Why We Don’t Finish What We Start was released last week, and reviewers have phenomenal things to say about it.

- If you missed the brilliant webinar How to Finish What We Start hosted by DecisionWise President Matt Wride, featuring Tracy Maylett and Tim Vandehey, we uploaded the full video to our YouTube channel for you.

- Swipe celebration, bowling, and Costa Vida 📚🎳🌯, it doesn’t get better than that!

Featured Discussion: Evidence-Based Employee Listening

In the 1980s and 1990s, healthcare practitioners began to see that they were relying too much on “gut” and not enough on analysis when choosing treatments. The status quo was leading to poor outcomes.

So, they started focusing on a concept they call evidence-based medicine (EBM). Here is a description of EBM by journalist and physician, Aaron E. Carroll.

“The mission of ‘evidence-based medicine’ is surprisingly recent. Before its arrival, much of medicine was based on clinical experience. Doctors tried to figure out what worked by trial and error, and they passed their knowledge along to those who trained under them. The benefits of evidence-based medicine, when properly applied, are obvious. We can use evidence from treatments to help people make better choices.”

EBM means relying less on instincts and more on objective data and evidence. As with anything, there are disagreements as to how we should implement EBM’s mission. Yet, no one seriously argues that we should stop using EBM to improve medical outcomes.

The need for evidence-based objectivity can also be found in organizations of all sizes. In our field, we agree that evidence-based rigor is needed both in people analytics and in administering employee surveys. As is the case with EBM, it is important to use proven research techniques to bring rigor and objectivity to our processes.

The rest of this article will focus on best practices in survey design and administration. Next time, we will touch on how to make evidence-based decisions using the data we collect.

Here are 12 tips to improve your employee survey design and administration.

Tip #1. Use a core set of validated and time-tested questions. It is tempting to draft questions focused on current initiatives or timely topics. These types of questions should be used occasionally, but add them to the end of your standard survey. Ad hoc questions come and go, but your core questions should be asked every year as they form the backbone of your research database.

Tip #2. Keep it consistent and communicate. Consistency sends the message that you care about your employees’ voice. It also ensures you are building a database of reliable questions that can be used for internal benchmarking and trending.

It’s important to communicate the survey’s purpose, expected outcomes, and who will use the results while also maintaining a reliable schedule. Good pre-survey communication campaigns are a must.

Tip #3. Focus on content standards.

- When asking for employee sentiment, ask a person directly what they think or feel. Avoid asking others what they think someone else believes.

- Measure behaviors and sentiments that have a recognized link to the organization’s performance, mission, values, or strategy.

- Avoid language with strong political or emotional associations, such as terms like “woke” or “right-wing.”

- Avoid merging two disconnected topics into one question, i.e., a double-barreled question. For example, “Do you think that students should have more classes about history and culture?”

- Keep it simple and as short as possible.

- Ensure face validity. This means that the questions make sense and are relevant to the survey participant.

Tip #4. Pay attention to the four basic principles of good survey science.

Content Standards: Do the questions focus on the right things?

Cognitive Standards: Will participants understand the questions consistently, will they have enough information to answer the questions, and are they willing and able to formulate answers?

Usability Standards: Will the insights and data that are collected help us answer the questions we are trying to answer, and will the results be organized in a format that is useful?

Action Standards: Are we able to do something with the information we have gathered, and can we solve the problems that are uncovered?

Tip #5. Invite diverse communities to participate in the research process. Are questions being vetted from various perspectives? Are minority voices being asked not only how we should measure something, but also being invited to share their opinions on “what” should be measured and evaluated in the first place?

Tip #6. Consider survey frequency. An onslaught of surveys leads to survey fatigue and low participation. Moreover, employee commitment wanes the more we ask of them. Our recommendation is one annual survey of around 40-50 questions, plus quarterly pulse surveys of no more than 5-7 questions each. Studies have shown that daily and weekly surveys reduce meaningful participation in the survey process.

Tip #7. Decide who participates in the survey. Effective sampling techniques can represent the entire United States with a few thousand people. However, choosing the right sample size and adjusting the data statistically is challenging. Therefore, we suggest surveying everyone, which also sends the message that everyone’s voice matters.
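To see why a few thousand respondents can stand in for a very large population, here is a minimal Python sketch of the standard sample-size formula for estimating a proportion, with a finite population correction. The function name, the 95% confidence level (z = 1.96), and the 3-point margin of error are illustrative assumptions, not DecisionWise recommendations.

```python
import math

def required_sample_size(population, margin_of_error=0.03, z=1.96, p=0.5):
    """Hypothetical helper: respondents needed for a simple random sample.

    Assumes a 95% confidence level (z=1.96) and the most conservative
    proportion (p=0.5) unless told otherwise.
    """
    # Sample size for an effectively infinite population.
    n0 = (z ** 2) * p * (1 - p) / (margin_of_error ** 2)
    # The finite population correction lowers the requirement for small groups.
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)

print(required_sample_size(330_000_000))  # 1068
print(required_sample_size(500))          # 341
```

Under these assumptions, a population of hundreds of millions needs only about a thousand respondents, while a 500-person organization would still need roughly two-thirds of its people. For most employers, the savings from sampling are small, which is consistent with the suggestion above to include everyone.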

Tip #8. Account for the impact the survey itself will have on the responses, and for any biases that may be present. When we set out to measure something, the act of measurement will often impact the very system we intend to evaluate. For example, if the survey instrument lists potential concerns and asks respondents to prioritize them, this becomes a type of prompt as to what the organization believes are the valid concerns.

Moreover, it is important to take recency bias into account when surveying an organization that has recently undergone significant positive or negative changes or turmoil. Recency bias is the tendency to give more weight to events that happened recently, or that are more easily remembered, than to earlier ones.

Other biases may include consecutive question bias, agreement bias, “Just let me get through this” bias, default respondent bias, questions that come with underlying assumptions, and more.

Tip #9. Make the survey easy to understand. Use simple language with established meanings that are understandable to respondents of varying education and socio-economic levels. Additionally, avoiding double negatives, jargon, acronyms, and emotionally charged language can improve clarity and reduce confusion.

Tip #10. Consider using pilot programs. Pilot programs give you the chance to tinker and dial things in before you try to survey the larger population. You can learn a great deal when you move from the abstract or theoretical to the application part of the process, and doing this with a limited set of individuals means the consequences of mistakes are less significant.

Tip #11. Use agreement scales. Based on thousands of successful survey administrations over the past 20 years, we have discovered that the most effective questions use a five-point agreement scale. This approach aligns with common benchmarks and avoids the confusion of transforming scales to match benchmark data or changing the scale and question style for participants.
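As a rough illustration of how five-point agreement responses are often summarized, the Python sketch below groups answers into favorable, neutral, and unfavorable percentages. The scale labels, the function name, and the rule that 4 and 5 count as favorable are assumptions chosen for this example; your benchmark provider may define them differently.

```python
from collections import Counter

# Assumed labels for a five-point agreement scale.
SCALE = {
    1: "Strongly Disagree",
    2: "Disagree",
    3: "Neither Agree nor Disagree",
    4: "Agree",
    5: "Strongly Agree",
}

def summarize_item(responses):
    """Hypothetical helper: percent favorable/neutral/unfavorable for one question."""
    # Ignore skipped or out-of-range answers.
    valid = [r for r in responses if r in SCALE]
    if not valid:
        return None
    counts = Counter(valid)
    total = len(valid)
    return {
        "favorable": round(100 * (counts[4] + counts[5]) / total, 1),
        "neutral": round(100 * counts[3] / total, 1),
        "unfavorable": round(100 * (counts[1] + counts[2]) / total, 1),
    }

answers = [5, 4, 4, 3, 2, 5, 4, 1, 3, 4]
print(summarize_item(answers))
# {'favorable': 60.0, 'neutral': 20.0, 'unfavorable': 20.0}
```

Keeping the same scale on every question means one summary like this can be compared across questions, across years, and against external benchmarks without any conversion.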

Tip #12. Maintain confidentiality. Survey designers should understand the difference between confidentiality and anonymity. Most surveys are confidential, not truly anonymous: some end users can see who said what. Do your best to maintain confidentiality. More on this next time, but remember to report or share only data that has been aggregated or anonymized.
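One common way to share only aggregated data is to suppress results for any group that is too small to protect individuals. The Python sketch below shows the idea. The minimum group size of 5, the function name, and the sample records are all assumptions for illustration, not a DecisionWise rule.

```python
from collections import defaultdict

MIN_GROUP_SIZE = 5  # assumed threshold; choose one that fits your organization

def aggregate_scores(records, min_group_size=MIN_GROUP_SIZE):
    """Hypothetical helper: average score per group, hiding small groups.

    `records` is an iterable of (group_name, score) pairs.
    """
    groups = defaultdict(list)
    for group, score in records:
        groups[group].append(score)

    report = {}
    for group, scores in groups.items():
        if len(scores) < min_group_size:
            # Too few respondents: report nothing rather than risk exposure.
            report[group] = None
        else:
            report[group] = round(sum(scores) / len(scores), 2)
    return report

records = [
    ("Sales", 4), ("Sales", 5), ("Sales", 3), ("Sales", 4), ("Sales", 4),
    ("Legal", 2), ("Legal", 5),
]
print(aggregate_scores(records))
# {'Sales': 4.0, 'Legal': None}
```

Here the two-person Legal group is withheld, because an average over so few people could reveal who said what.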

Of course, if you don’t want to handle all these details, then turn it over to a third party, like DecisionWise!

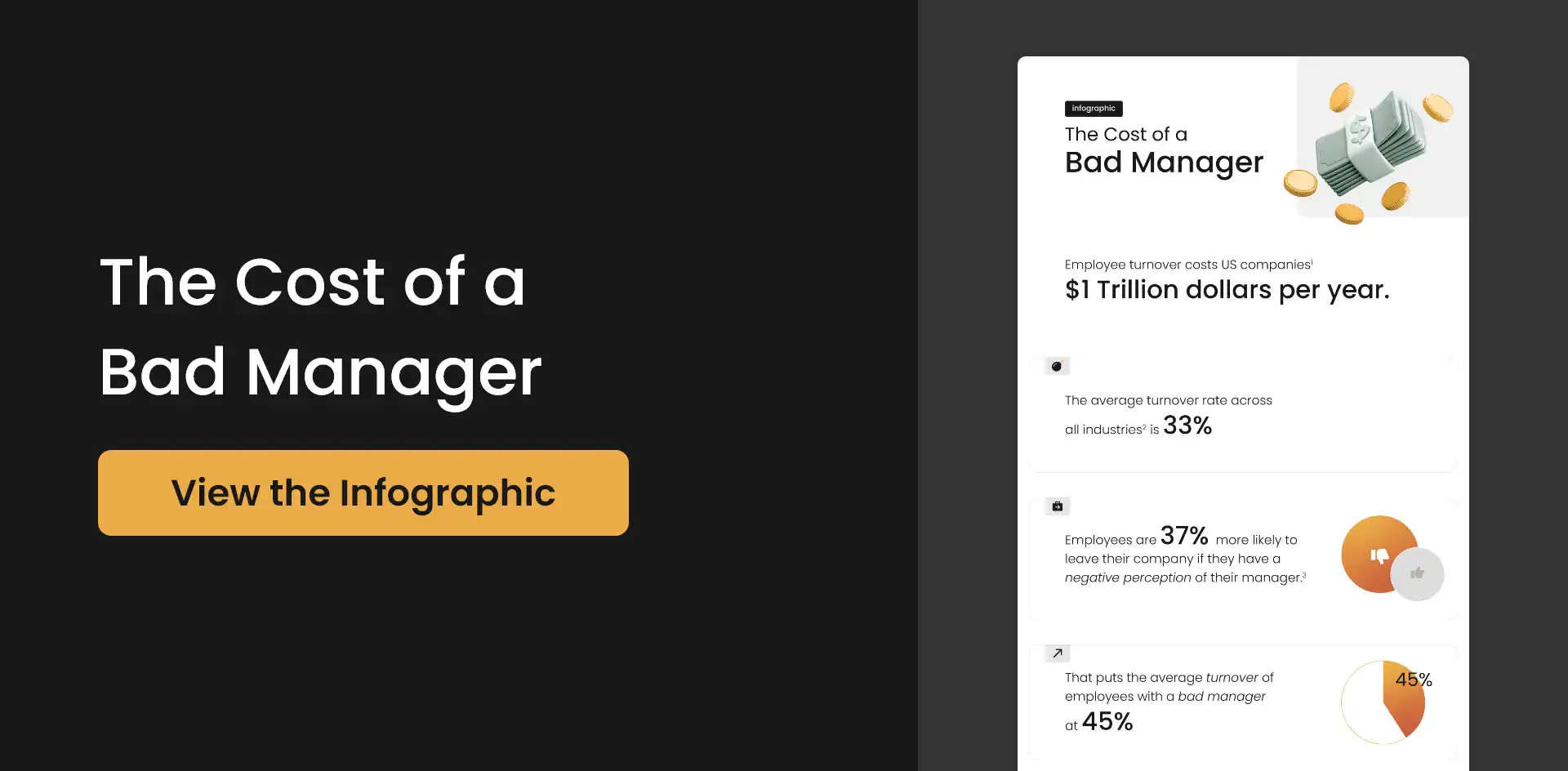

So, without good survey technique, your listening tools become mere polls. Polls are useful but limited in their scope and utility. Understanding the demarcation between polls and survey research is important in helping us maintain high research standards.

What’s Happening at DecisionWise

UPCOMING WEBINAR

HR News Roundup

- Predictive Analytics Can Help Companies Manage Talent

- Efficiency and Engagement: The Great Balancing Act of Workforce Tech

- Ways AI is Changing HR Departments

- Do You Know What Your Team Needs? Here Are 5 Ways to Find Out

- 10 Reasons Companies Consider Using Big Data In HR Decision-Making

- Can a Robot Tell You That an Employee is About to Quit?

- Survey: ESG Strategies Rank High with Gen Z, Millennials